Foregrounding Whole Body / Whole System Interaction – With all Systems

WHY HSI? Human Computer Interaction (HCI) has been framed as a focus on how to improve our access to computers, desktop systems in particular. Yet over the past 10 years in particular, computers have largely moved off the desktop and disappeared into almost every electronic device we use.

Our interactions have moved from memorized text commands to gestures and, increasingly, to voice; from point-and-click to touch and now to prompts in natural language. But all these interactions are still predominantly static. They also focus on the point of contact (for example, the touch of finger to screen and how to optimize it) rather than on what enables that contact within the system, particularly within human systems.

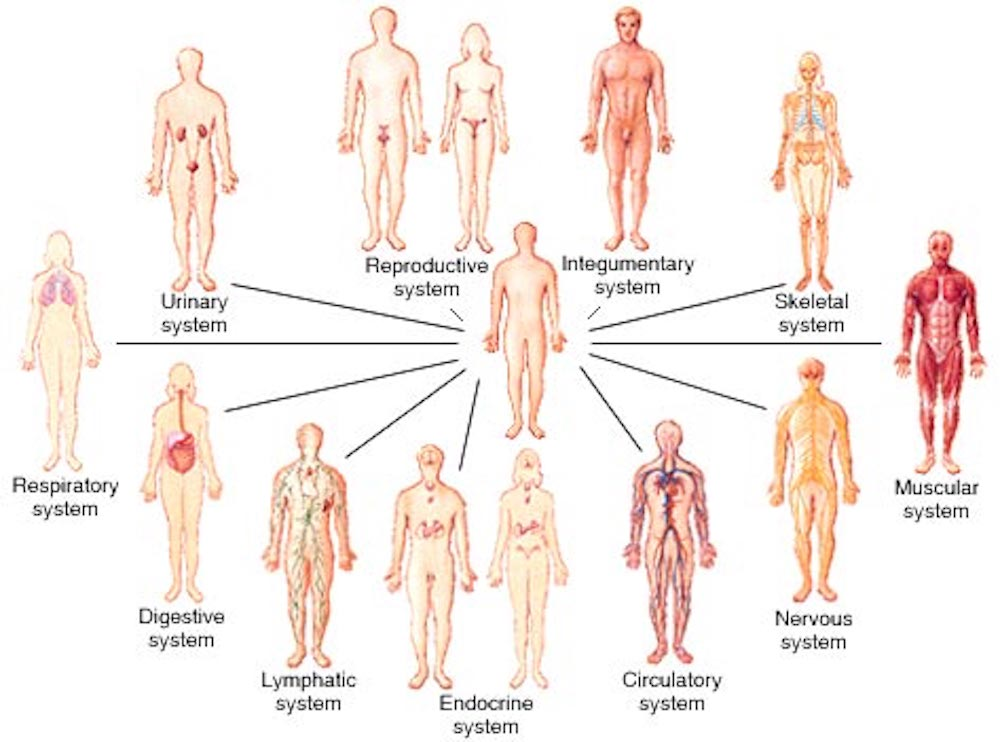

Figure showing the 11 organ systems in humans – there’s a lot going on that informs how we perceive, perform, feel, understand and interact with the world around us

We have proposed Human Systems Interaction as a new framing, identifying a new phase of computationally backed interaction between humans and other complex systems. A key part of this perspective is also to know more about the systems themselves, including ourselves.

Given that computation has become almost part of the environment, we see new ways of interacting with these systems that may in fact let us be more human: rather than being chained to a CRT on a desk, or to a tiny screen in our hands, we can be present and engaged in our environment, where our interactions may be enriched.

For example: how does the state of our body inform trust? Can it improve learning? Are we better when we move, at least for a LOT of interactions? And for more cerebral interactions, what triggers trust versus skepticism toward our potentially agentic interlocutors?

As we’ll see in the examples, leveraging better knowledge about ourselves as whole-bodied, complex, physio-neuro-electro-bio-mechano-chemical systems helps us make interactions better, for us. It’s not just about being able to access computational systems, but about how we can better design them to help us #makeNormalBetter, for all, @scale.

In this part of the ICT site visit (People, Information and Interaction), we’ll share a series of Human Systems Interaction explorations:

Exploration ONE: Incidental Interaction

Incidental Interaction is about how we (1) blend knowledge about ourselves as physiological, biological systems (2) to leverage what we’re already doing as interactions with the physical world (another system), so that we feel and perform better as humans, with each other, for longer. INCIDENTAL: INTERACTIONS THAT USE WHAT YOU’RE ALREADY DOING TO DO MORE OF WHAT YOU WANT, WITH LESS COST AND MORE BENEFIT.

Example Incidental Interaction projects from the visit to the WellthLab, with contributions from the EPSRC/University Large Equipment Award

People: Christoph Tremmel, Chris Tacca, Alexander Ng (shown), Alex Bincalar (PhD student), with Arturo Vasquez Galvez and George Muresan (PhD student), and m.c.

Understanding the Human <-> Systems Interacting

Project 1: Synthesizing co-presence for more human online interactions

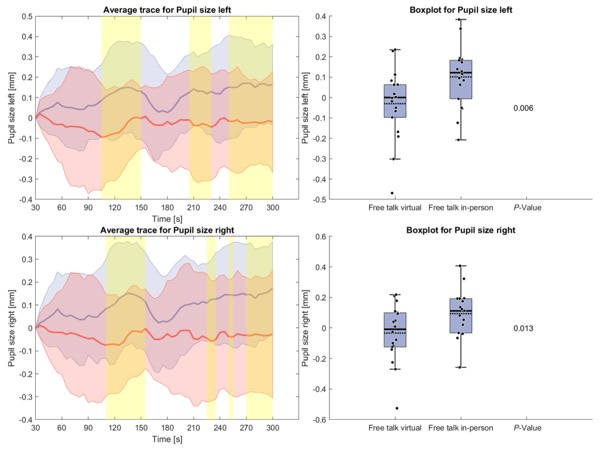

In-person human interactions build trust and effectiveness faster. How do physiological responses in online interactions differ from those in in-person interactions [paper]?

Interaction Challenge: Can we synthesize interaction components to help induce such states/behaviors when online? Does that improve interaction quality? (work forthcoming from George Muresan’s PhD)

The graphs show pupil size differences between in-person (larger) and online dialogic interactions. Pupil size is related to arousal, which affects attributes such as engagement, attention and trust.

Affordability: how can we use technologies already in everyday use (e.g., microphones, webcams [paper]) to detect these measures and reflect or translate them into usable UIs that help tune real-time interactions?

Project 2: Modelling SAD (social anxiety disorder) interaction

Our focus is to look at the physiological signals of SAD relative to actual interactions [paper] and, from this, to inform incidental interaction designs that better support real-time engagement. Benefits include more accessible support for the most diagnosed mental health condition in the NHS, and new fundamental maps of real human-human interaction in SAD.

- KEE Benefits: Based on the above work, we have also developed models to improve new EEG wearable technologies, making these interaction measures more compact and affordable for broader research and home use [paper].

Project 3: CERA – Incidental Learning – Engaging WHOLE body interaction

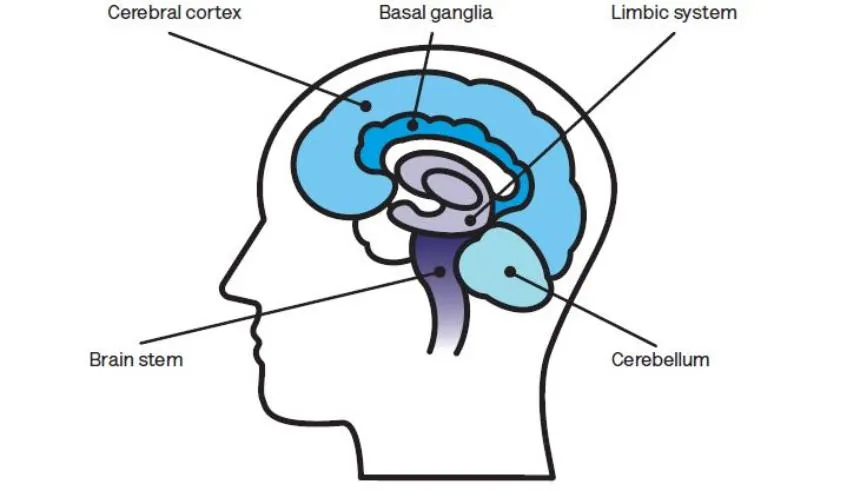

CERA explores leveraging our physiology as a complex system to support learning. In this case, we’re looking at how the cerebellum manages movement, language and context, to explore how these components, working together, can support learning while moving: learning about the environment, in the environment.

INCIDENTAL Interaction: you are already moving in the environment, perhaps walking in a park or heading to a cafe. CERA leverages what you are already doing to support another aspiration, such as learning a second language.

The approach can make lifelong learning more accessible and affordable.

Project 4: Elder Athlete – Eliminate Frailty; Improve Longevity

Elder Athlete/Incidental Interaction is designed to help older adults, especially sedentary adults seeking to maintain their independence, build and maintain the strength and balance they need for this goal.

CHALLENGE: In our preliminary work, we’ve shown that these adults are not well supported by technologies that effectively ask them to start a fitness program supported by various tools or practices [PAPER]. Strength and balance are fundamental for independent longevity, yet helping folks build them, especially those without a history of physical practice, is non-trivial.

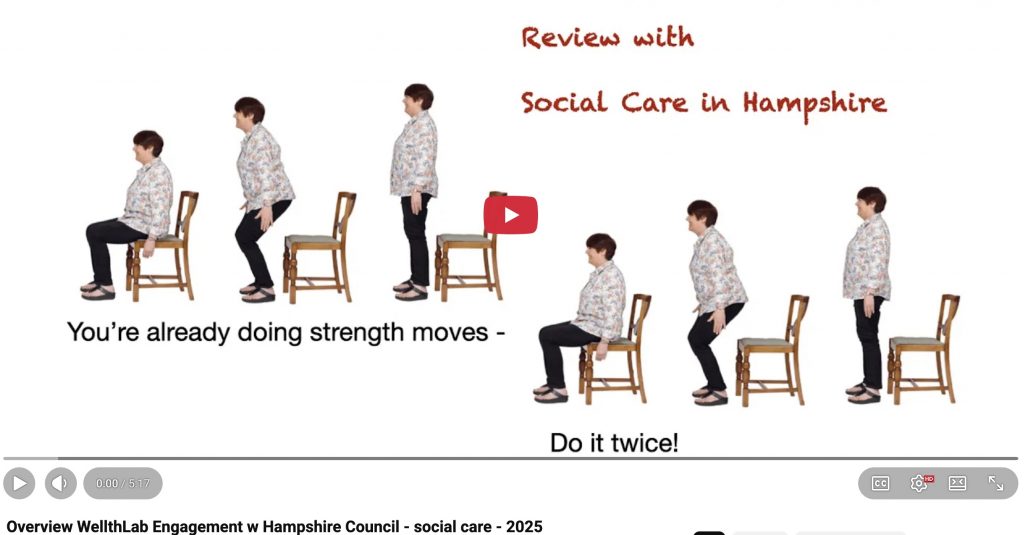

APPROACH: Once again, we are taking an INCIDENTAL approach. To help build strength and balance, we are leveraging what people are already doing AS strength moves: movements like sit/stand/push/grab/pull. The technologies we’ve built to explore supporting this practice foreground novel “unwearables”: unobtrusive sensors and devices that provide reminders and guides for these actions.

UNWEARABLES: Adding these sensors and guides to the environment means a person is not required to wear anything special to interact with the system. They don’t have to follow a special program or set aside special space. The system both guides and invites movement in context. An example is what we call “do it twice”: when a person sits down, the system invites them to stand and sit again to build up a little more strength. The invitation can be ignored, and the person can reflect at any time on how they’re feeling (stronger? tired?) with their “doubles”.
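The “do it twice” loop can be pictured as a small state machine over a seat-load signal. The Python sketch below is illustrative only and is not the WellthLab implementation: it assumes a hypothetical seat sensor reporting a normalised load between 0 and 1, and the names `DoItTwiceTracker`, `SIT_THRESHOLD`, `STAND_THRESHOLD` and `invite_window` are made up for this example. It captures the two behaviours described above: each fresh sit-down triggers an invitation, and an ignored invitation simply lapses.

```python
from dataclasses import dataclass, field

# Hypothetical thresholds on a normalised seat-load reading
# (0.0 = empty seat, 1.0 = full body weight). Two thresholds (hysteresis)
# stop sensor noise around a single value from toggling the state.
SIT_THRESHOLD = 0.6
STAND_THRESHOLD = 0.2


@dataclass
class DoItTwiceTracker:
    """Illustrative "do it twice" loop: each sit-down triggers an invitation
    to stand and sit once more; a repeat within the window counts as a double."""
    invite_window: int = 30  # samples during which a repeat still counts
    seated: bool = False
    awaiting_double: bool = False
    samples_since_invite: int = 0
    doubles: int = 0
    events: list[str] = field(default_factory=list)

    def update(self, load: float) -> None:
        # Let an unanswered invitation lapse quietly: it can always be ignored.
        if self.awaiting_double:
            self.samples_since_invite += 1
            if self.samples_since_invite > self.invite_window:
                self.awaiting_double = False
                self.events.append("invite_expired")

        if not self.seated and load >= SIT_THRESHOLD:
            self.seated = True
            if self.awaiting_double:
                # Stood up and sat down again while the invitation was open.
                self.doubles += 1
                self.awaiting_double = False
                self.events.append("double_completed")
            else:
                # A fresh sit-down: invite one more sit-stand.
                self.awaiting_double = True
                self.samples_since_invite = 0
                self.events.append("invite")
        elif self.seated and load <= STAND_THRESHOLD:
            self.seated = False
            self.events.append("stood_up")


tracker = DoItTwiceTracker()
for reading in [0.0, 0.9, 0.9, 0.1, 0.8, 0.1]:
    tracker.update(reading)
print(tracker.events)   # ['invite', 'stood_up', 'double_completed', 'stood_up']
print(tracker.doubles)  # 1
```

Two thresholds rather than one are a common way to keep a noisy sensor from flickering between “seated” and “standing”; the values here are placeholders, not calibrated figures.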

Part of our Do It Twice system, supporting incidental sit-stand interaction to build strength

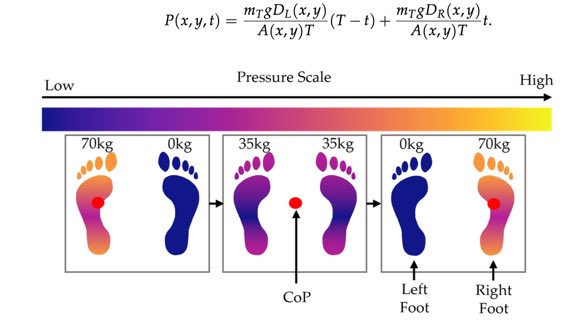

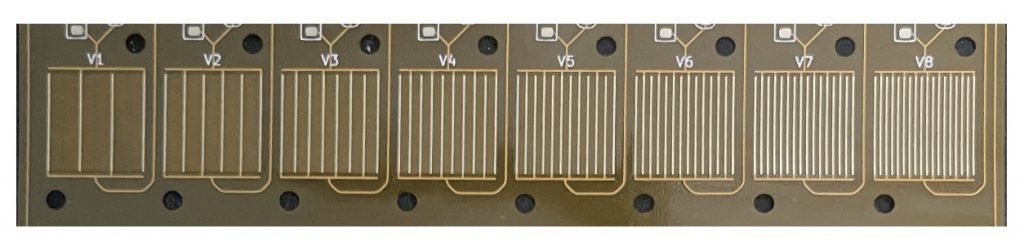

Example KEE-associated outcomes: (1) To support this innovative interaction, our approach is developing new, low-cost, flexible materials and sensors for incidental interaction [PAPER].

Example of fundamental calculations towards optimizing flexible sensor layouts to minimize cost and accurately detect center of pressure / balance.
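The center of pressure mentioned above is usually computed as the pressure-weighted average of the sensor positions: the sum of (pressure × position) over all cells, divided by the total pressure, separately for x and y. A minimal Python sketch, assuming a hypothetical four-cell layout with cell centres in metres; it is not the lab's actual layout or calibration.

```python
def center_of_pressure(positions, pressures):
    """Pressure-weighted centroid of sensor cell positions.

    positions: list of (x, y) cell centres in metres (hypothetical layout)
    pressures: matching list of non-negative readings, any consistent unit
    """
    if len(positions) != len(pressures):
        raise ValueError("one pressure reading is needed per sensor position")
    total = sum(pressures)
    if total <= 0:
        raise ValueError("no load detected on the sensor")
    x = sum(p * px for (px, _), p in zip(positions, pressures)) / total
    y = sum(p * py for (_, py), p in zip(positions, pressures)) / total
    return x, y


# Hypothetical four-cell layout at the corners of a 0.4 m x 0.4 m mat.
cells = [(0.0, 0.0), (0.4, 0.0), (0.0, 0.4), (0.4, 0.4)]
print(center_of_pressure(cells, [1, 1, 1, 1]))  # (0.2, 0.2): load centred
x, y = center_of_pressure(cells, [3, 1, 3, 1])  # weight shifted toward x = 0
print(round(x, 3), round(y, 3))  # 0.1 0.2
```

Generally, fewer cells make the sensor cheaper but the estimate coarser, which is the kind of trade-off a layout optimization has to balance.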

Exploring novel sensor designs for high-accuracy, low-cost, robust sensor applications

(2) Social Benefit/KEE: We are also translating this research with UK councils, such as Hampshire Council, to support their social care workers [Video from a presentation at the Jan. 28, 2025 national social care meeting, 500 participants].

WellthLab Incidental Interaction Focus: building knowledge, skills and practice with innovative technology that helps people get off technology to thrive, batteries not required. Examples include building up language proficiency, improving human-to-human interaction skills, and building strength proficiency and practice.

These next projects are modeled in the Aviary, our robot/drone interaction space.

Exploration TWO: Creative Kids and AI

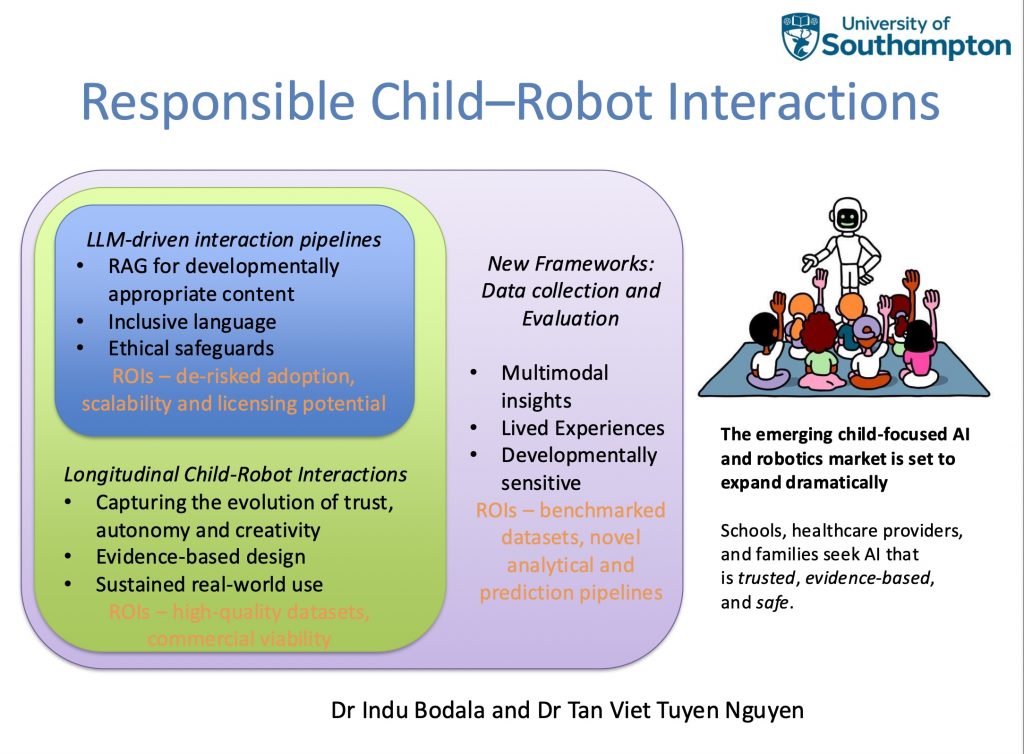

AI-enabled robots show promise for personalised learning, creative expression, and mental-health-support activities. Without careful design, they risk amplifying bias, reducing children’s autonomy, or failing to meet real developmental needs. As AI systems grow more powerful, the urgent challenge is ensuring these technologies are safe, developmentally appropriate, trustworthy, and aligned with children’s lived experiences, not merely technically impressive. Our research advances the vision of responsible AI by:

- Developing novel LLM-driven robot interaction pipelines for safe and inclusive child-robot interactions. The project builds on ongoing work using RAG‑based models that retrieve safe, curriculum‑aligned, age‑appropriate content and merge it with LLM generation.

- Running longitudinal interactions to build an evidence-based foundation for responsible design, grounded in children’s real experiences and developmental needs.

- Developing new frameworks for data collection (capturing multimodal interactions) and for evaluation (enabling developmentally sensitive analysis of how children’s trust, autonomy, and creativity evolve).
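The RAG-based pattern described above (retrieve vetted, age-appropriate content, then ground the LLM’s generation in it) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the project’s pipeline: the `Snippet`, `CORPUS`, `retrieve` and `build_prompt` names are hypothetical, simple word overlap stands in for the embedding-based retrieval a real system would more likely use, and the call to an actual LLM is omitted; the sketch only builds the grounded prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snippet:
    text: str
    min_age: int
    max_age: int
    topic: str


# Hypothetical vetted corpus; in practice this would be curated,
# curriculum-aligned material reviewed for age-appropriateness.
CORPUS = [
    Snippet("Trees take in carbon dioxide and release oxygen.", 5, 11, "climate"),
    Snippet("Greenhouse gases trap heat in the atmosphere.", 8, 14, "climate"),
    Snippet("Recycling helps reduce the amount of waste we produce.", 5, 11, "environment"),
]


def _overlap(query_words: set[str], snippet: Snippet) -> int:
    """Crude relevance score: number of words shared with the query."""
    return len(query_words & set(snippet.text.lower().rstrip(".").split()))


def retrieve(query: str, age: int, k: int = 2) -> list[Snippet]:
    """Filter to age-appropriate snippets first, then rank by word overlap."""
    words = set(query.lower().split())
    eligible = [s for s in CORPUS if s.min_age <= age <= s.max_age]
    scored = [(_overlap(words, s), s) for s in eligible]
    scored = [pair for pair in scored if pair[0] > 0]  # drop irrelevant snippets
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [s for _, s in scored[:k]]


def build_prompt(query: str, age: int) -> str:
    """Ground the (omitted) LLM call in retrieved facts only."""
    context = "\n".join(f"- {s.text}" for s in retrieve(query, age))
    return (
        f"You are a storytelling robot talking with a {age}-year-old child.\n"
        "Use only the facts below; if they do not answer the question, say so kindly.\n"
        f"Facts:\n{context}\n\nChild's question: {query}"
    )


print(build_prompt("why do trees help with carbon dioxide", 6))
```

Filtering by age before ranking, rather than after, means unsuitable material never reaches the prompt, however well it matches the question.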

Demo: Pepper robot narrating a story on climate change, by Dr. Tuyen Nguyen and Dr. Indu Bodala.

Exploration THREE: How does a Non-Expert Trust AI? How does Interaction Design address this gap?

Are we at risk of overtrusting AI? The challenge of knowing what to trust, and when, becomes even more pressing given LLMs’ tendency to produce confidently voiced hallucinations.

For example, a finance scenario:

Prompt 1: I have 5k £. How should I best invest it in the stock market for long term profit?

Prompt 2: Give me only 1 option (the best) as a 1 paragraph summary. (5 lines or less)

LLM advice: Put the full £5k into a Stocks & Shares ISA and invest it all in Vanguard FTSE Global All Cap Index Fund, then leave it alone for 10–20+ years. It gives instant diversification across thousands of companies worldwide, very low fees, and historically strong long-term returns. Don’t try to time the market or pick stocks—just invest once, reinvest dividends, and ignore short-term ups and downs.

Is this sound advice? Should we trust it? The LLM was all too happy to give this short summary without any information about my specific circumstances.

Another Scenario: Legal Advice – who ya gonna call?

What if legal advice is presented as coming from either a human expert or an AI? It turns out that, in one study with a replication, participants were more willing to act on the AI-generated advice when they did not know its source [1].

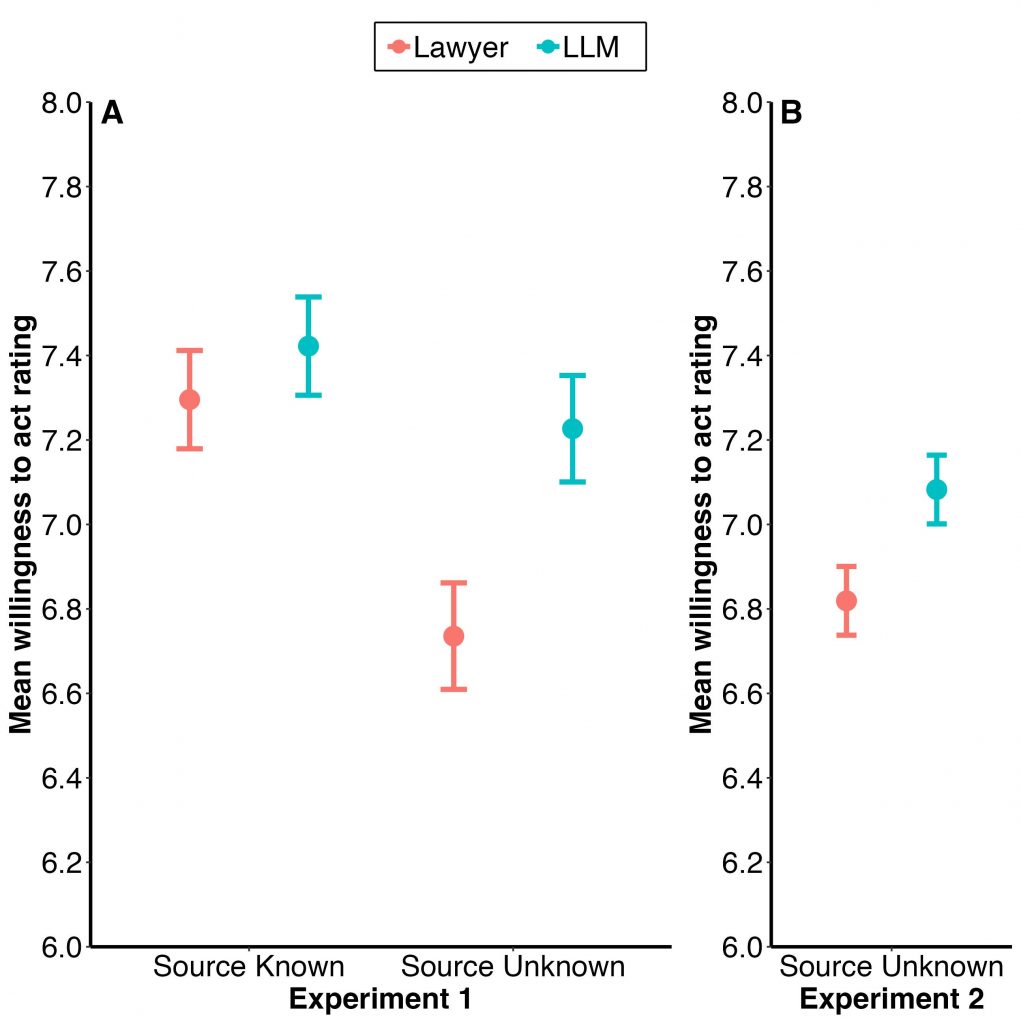

The graphs indicate participants’ mean willingness to act on the legal advice provided by a lawyer (red) and an LLM (blue), respectively. Experiment 1 (N=100) shows that, when the advice source was known to participants, no significant difference in willingness to act was observed. However, when the advice source (lawyer or LLM) was not known, participants reported a significantly higher willingness to act on the LLM-generated legal advice. We were able to replicate this effect in Experiment 2 (N=78).

INTERACTION CHALLENGE: To make informed decisions, we generally need, first, a sufficient understanding of the capabilities and limitations of the system giving us information (in this case, the AI), which we may not have. Second, we need some level of domain expertise (here, about finances) to distinguish between good and poor advice of the kind we are getting from the AI. When these elements are in place, the risk of placing unwarranted trust in LLM-generated advice is significantly reduced.

But the whole point of many of us using AI is that we’re NOT domain experts, so there seems to be a contradiction here, right? We actually need to design JITS (just-in-time interactions) that help flag where an AI advising us may be wrong and where we may need to dig deeper.

A mission for this work is determining how to flag, in the context of an interaction, the hot spots that a human expert would say need confirmation, or need associated knowledge, before we act on an AI system’s output. The benefits include reducing risk and errors in human decision making, and increasing knowledge, making AI/human interactions richer and better for all of us.
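To make the JITS idea concrete, the Python sketch below attaches “dig deeper” flags to a piece of AI advice, using the finance answer quoted earlier. It is purely illustrative and is not the method under development: the `HOT_SPOTS` cues and the `jit_flags` function are hypothetical, and crude keyword matching stands in for the expert-informed flagging this work aims to build.

```python
import re

# Hypothetical "hot spot" cues standing in for expert-derived knowledge.
# A real system would need far richer, domain-validated signals.
HOT_SPOTS = {
    "absolute or sweeping language": re.compile(
        r"\b(all|always|never|guaranteed|instant)\b", re.IGNORECASE),
    "discourages further checking": re.compile(
        r"\b(leave it alone|ignore)\b", re.IGNORECASE),
    "past performance framed as a promise": re.compile(
        r"\bhistorically\b", re.IGNORECASE),
}


def jit_flags(advice: str, asked_about_circumstances: bool) -> list[str]:
    """Return just-in-time prompts telling a non-expert where to dig deeper."""
    flags = [label for label, pattern in HOT_SPOTS.items() if pattern.search(advice)]
    if not asked_about_circumstances:
        flags.append("advice given without asking about your circumstances")
    return flags


advice = (
    "Put the full £5k into a Stocks & Shares ISA and invest it all in Vanguard FTSE "
    "Global All Cap Index Fund, then leave it alone for 10–20+ years. It gives instant "
    "diversification across thousands of companies worldwide, very low fees, and "
    "historically strong long-term returns."
)
for flag in jit_flags(advice, asked_about_circumstances=False):
    print("Check:", flag)
```

Even this toy version surfaces the concern raised about the finance example: the advice was given without any questions about the user’s circumstances.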

- Resources:

- [1] Objection Overruled! Lay People can Distinguish Large Language Models from Lawyers, but still Favour Advice from an LLM. https://dl.acm.org/doi/10.1145/3706598.3713470

Exploration FOUR: Bridging Reflexes to AI Interactions.

Human-Agent systems can be designed to have different characteristics; for example, we often care about speed or accuracy (and make trade-offs between them). There are other important but more esoteric characteristics of the work that humans and non-human agents can do together.

In qualitative data analysis, where the focus is on interpreting meaning rather than counting or measuring, the “Reflexivity” of an analysis has become widely regarded as a crucial property. To be reflexive, human researchers try to identify, document and account for their own interpretive frames and biases. In terms of providing evidenced assurance around the rigour and reliability of an analysis, reflexivity is to Quals what Statistical Significance is to Quants. It helps researchers to design research that responds to the social context it is conducted within, and helps others to understand the generalisability, applicability and underlying assumptions of the findings.

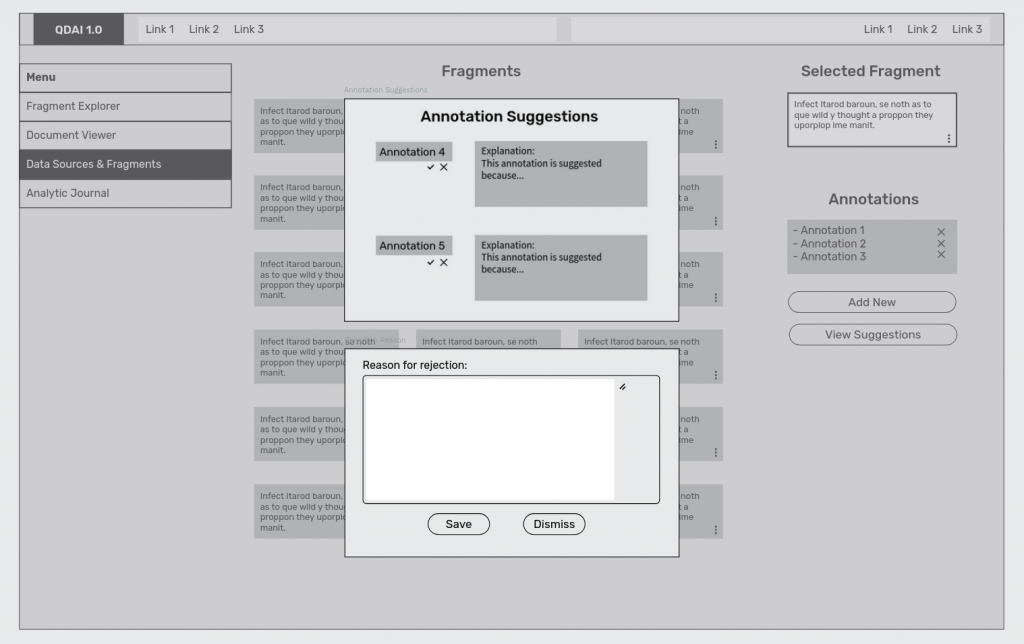

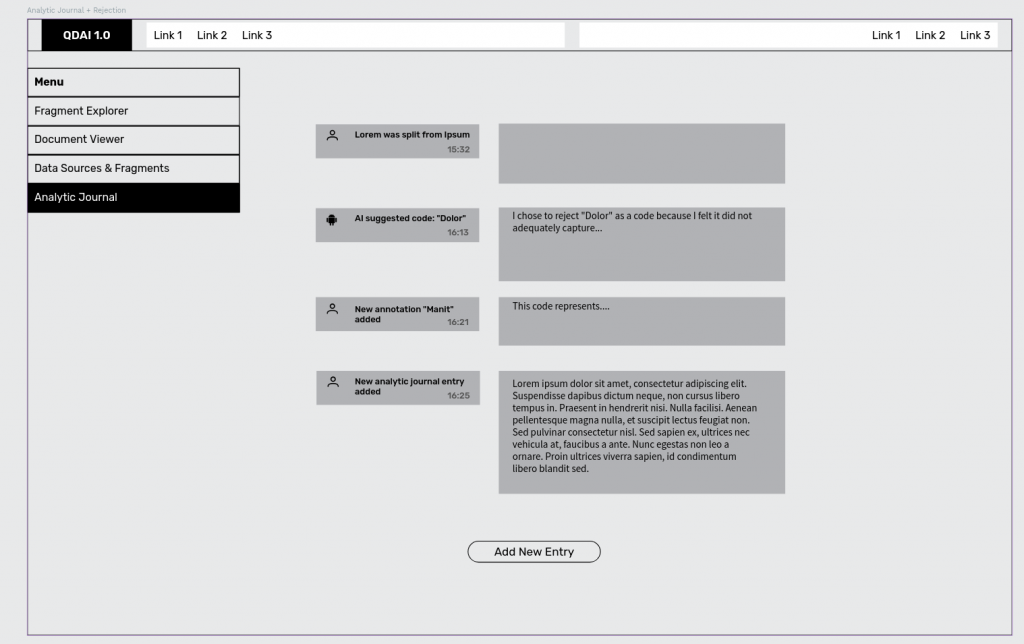

Examples of reflexive interactions

LLMs cannot explain themselves; they cannot be reflexive. But prima facie, given their ability to reason quickly over large text corpora, LLMs should be a massive accelerator for quals research, reducing the high level of expertise currently needed for qualitative analysis, a requirement that often limits the use of this approach where that expertise is not available. By developing better qualitative analytic/reflexive interaction support, this faster and less expertise-intensive data analysis would become accessible to a greater range of disciplines and would improve the cost-value of publicly funded research and innovation.

The social sciences make extensive use of qualitative data analysis, but qualitative methods are also prevalent in engineering, which relies on qualitative data analysis expertise to uncover system requirements or evaluate the acceptability of new technologies. Qualitative data analysis is also frequently applied in industrial and public sector contexts, for example when undertaking market research or performing public consultations, and more efficient analysis would contribute to private and public sector efficiency.

The Interaction challenge: how can we build interfaces and workflows that combine the speed of LLM analysis with capturing, incorporating and exploring the human insight and reflexivity that (currently) only humans can bring?

Exploration FIVE: Human-Swarm Interaction

with Mohammad Soorati

With the rapid advances in AI and the falling cost of electronics, we will soon share our space with large swarms of autonomous systems. Interacting with these swarms demands new interaction methods, as one-to-one control will no longer scale.

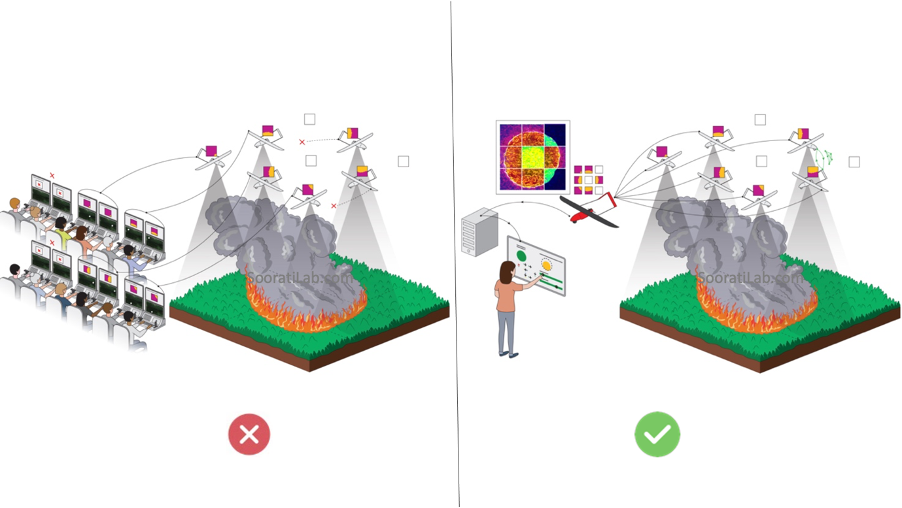

It is essential to make swarms reliable, transparent, and trustworthy [1] to be able to share our living spaces with them. Our research aim is to make swarms easier and safer to use. We use machine learning techniques to explain the complex behaviour of the swarm and build collective decision-making capabilities that are more reliable and better suited to real-world settings. We also study how different interaction techniques can give users better situational awareness without overwhelming them [2]. We showcase our research in different contexts through multiple robotic platforms, including ground robots (e.g., assisting older users with mobility) and aerial systems (e.g., wildfire monitoring).

Currently, autonomous systems are controlled by multiple operators, often exceeding a 2-to-1 operator–robot ratio. While this model may be appropriate for safety-critical or early-stage systems, it does not scale to environments involving large numbers of robots.

Achieving scalable multi-robot operation requires inverting this ratio: a single operator must be able to understand and control several robots simultaneously (as per image above). The figure below compares the semi-autonomous control paradigms with the proposed human–swarm framework, which is designed explicitly for scalable supervision.

- References:

- [1] Soorati, M. N., Naiseh, M., Hunt, W., Parnell, K., Clark, J., & Ramchurn, S. D. (2024). Enabling trustworthiness in human-swarm systems through a digital twin. In P. Dasgupta, J. Llinas, T. Gillespie, S. Fouse, W. Lawless, R. Mittu, & D. Sofge (Eds.), Putting AI in the critical loop (pp. 93–125). Academic Press. DOI: https://doi.org/10.1016/B978-0-443-15988-6.00008-X

- [2] Soorati, M., Clark, J., Ghofrani, J., Tarapore, D., & Ramchurn, S. D. (2021). Designing a user-centered interaction interface for human–swarm teaming. Drones, 5(4), 131. DOI: https://doi.org/10.3390/drones5040131

Human Systems Interaction Takeaway for the EPSRC ICT Visit Team

Human Systems Interaction here at ECS in Southampton takes a holistic approach to the systems involved (human, computational, environmental and so on): how do these systems work as physical, social and/or computational systems? What do they need to perform best? How can we reduce the gap between these requirements for human and social benefit?

We see value in understanding our actual wiring: it can help us tune our interactions with other systems, and design systems that better enable us to feel, perform and be better.

From this focus, we are keen to explore how to better incorporate computational interaction in more contexts: not just while using a computational device (like writing an AI prompt), but also through time-limited, privacy-respecting, autonomy-enabling interactions. In effect, designing tech to help us get off that tech.

Mission: how to design innovative, in context, computational, interactive technologies to support humans to feel and perform better, together – as humans constantly interacting with complex systems of all kinds in order to help #makeNormalBetter for all, @scale. -m.c.

Thanks for visiting with us, Thurs Feb 12, 2026 at ECS Southampton.